The Upskilling Industrial Complex

A roughly $400 billion market has organized itself around the appearance of solving a problem it has little structural incentive to actually solve.

TL;DR: The global workforce development market is worth roughly $400 billion and growing. It is also, measured against its stated purpose of helping workers survive economic disruption, largely not working. The reasons are structural, not accidental. The vendors, the employers, and the government programs all have the wrong incentives — and until that changes, spending more money on the same system will produce the same results.

Note: this author has a long-standing belief that formal classroom or online learning is mostly 1) employee engagement disguised as learning, 2) a check-the-box exercise for compliance, or 3) the easy path to “action” that doesn’t effectively change anything. So, this article isn’t unbiased.

There is a version of this story that sounds like a success.

The global corporate training market is estimated at roughly $400 billion in 2024, depending on how you measure it. LinkedIn Learning has 27 million learners. Coursera has 148 million registered users. Governments around the world have launched workforce development initiatives backed by hundreds of millions of dollars. Every major consulting firm has a workforce transformation practice. Every major tech company has an upskilling commitment with a number attached to it. Amazon has pledged $1.2 billion to train 300,000 workers. Microsoft has committed to training 2.5 million people in digital skills across 25 countries. The investment, the urgency, and the vocabulary are all there.

And underneath all of it is the hum of a specific anxiety. Generative AI has produced the kind of public alarm that demands a visible institutional response. Upskilling is that response. It is legible, announceable, and fundable. A company facing questions about automation layoffs can point to a training program. A government facing questions about displaced workers can point to a workforce development initiative. A consulting firm can propose a reskilling strategy. The market has organized itself to supply exactly what the moment demands: the appearance of action at scale. Whether the action produces the promised results is a different question, and one the market has not been structured to answer.

The results, where we can measure them, are not encouraging. Research consistently shows that only 5 to 15% of learners who start self-paced online courses actually finish them. The Burning Glass Institute, having analyzed 65 million career records, found that only 1 in 8 credentials in the current marketplace delivers material wage gains for workers. The 2025 graduates who cannot find entry-level work are still not finding it. The 1.4 million U.S. workers who left manufacturing between 2000 and 2010 largely did not retrain into the knowledge economy. The IMF estimates that over 40% of the global workforce will need significant upskilling by 2030. The programs that exist today are reaching a fraction of that population, and the fraction they are reaching is not acquiring the skills that the labor market is actually buying.

The gap between the money flowing into workforce development and the outcomes flowing out of it is not a funding problem. It is a design problem, and the design problem is not accidental. It is structural.

What the Market Is Actually Optimizing For

The workforce development market does not get paid for outcomes. It gets paid for activity.

Corporate L&D budgets are measured by training hours completed, courses offered, completion rates, and learner satisfaction scores. The question that almost never gets asked with rigor is whether the person who completed the training does their job differently afterward, and whether the organization performs better as a result. This is not because learning and development professionals do not care about outcomes. Many of them care deeply. It is because the measurement infrastructure does not exist at most organizations, and because executives who fund training programs are rarely around long enough to see the long-term payoff. The training budget is justified at the beginning of the year and evaluated at the end, and the evaluation is built on inputs.

The ed-tech and bootcamp sector has a similar problem, and the incentive structure is even more direct. Companies like Coursera, Udemy, and LinkedIn Learning are subscription or per-course businesses. Their revenue grows when more people enroll. Completion and job placement, where they are tracked at all, are marketing metrics more than product accountability metrics. The flagship bootcamps that promised six-figure salaries to graduates after 12 weeks of coding instruction have had their accountability claims tested by independent researchers, and the results are significantly less impressive than the brochure. A 2021 study by the National Student Clearinghouse found that many coding bootcamp graduates did not work in tech within two years of completing their programs. The programs that offer income-share agreements have slightly better accountability built into the model, but they are the exception in an industry that largely sells transformation and measures enrollment.

Government workforce development programs have perhaps the most institutionalized version of this problem. Workforce Innovation and Opportunity Act funding in the United States flows through a network of state workforce agencies, local workforce boards, community colleges, and approved training providers. The accountability framework requires states to report on employment rates and median earnings at specific intervals after program completion. That sounds like outcomes accountability. In practice, the approved training provider lists are often outdated, the wage targets are set against regional medians rather than the specific jobs workers are training for, and the programs that receive the most funding are not always the programs that produce the best labor market results. A 2019 GAO report found that DOL had not determined whether WIOA training programs were actually effective. A 2024 Urban Institute analysis found persistent gaps between the skills community college and workforce training programs deliver and the skills local employers say they need.

The three segments of the market are distinct. Their dysfunction is the same. They have organized themselves around the evidence of effort rather than the fact of outcome.

The Skills Mismatch Is Not What You Think

The standard narrative about workforce development failure invokes the skills gap: companies cannot find workers with the right skills, workers cannot find jobs that match what they learned, and the solution is to close the distance between supply and demand. More AI courses. More cloud certifications. More digital literacy training. The training market sells this narrative because it is the one that generates the next round of purchases.

The actual skills mismatch is more precise and harder to paper over with coursework.

What employers consistently say they cannot find is not people who have completed courses in a technology. It is people who can apply knowledge in context, who can work across ambiguity, who have the judgment to know when the AI output is wrong and why. The World Economic Forum’s Future of Jobs 2025 report ranks analytical thinking, creative thinking, resilience, and leadership among the most in-demand skills of the coming decade. These are not skills that transfer through video lectures. They develop through practice, in conditions of real consequence, with feedback from people who can see the quality of the judgment being exercised.

The deepest problem in workforce development is not that workers lack credentials. It is that credentials have been substituted for competence as the unit of exchange, and the market has responded by selling more credentials. A worker who completes a Google AI certification has not become an AI practitioner. A manufacturing worker who finishes a 16-week coding bootcamp has not become a software engineer. The programs that successfully bridge that gap are long, expensive, deeply connected to employers, and available at nowhere near the scale the moment requires.

What makes skills development actually work, when it works, is proximity to real work, feedback tied to specific performance, and enough time for the learning to consolidate into practice. None of those things scale cheaply. Which is why the market has largely built something that does scale cheaply and called it upskilling.

The Vendor-Employer Codependency

There is a dynamic worth naming that does not get discussed often enough.

Large employers do not have an incentive to be rigorous about their workforce development ROI because workforce development is partly a narrative asset. The company that announces a $50 million commitment to reskill its workforce is making a statement about its values and its relationship to the communities where it operates. That statement has real value in the current political environment, where automation layoffs attract scrutiny and companies are under pressure to demonstrate that they are not simply extracting value from workers while replacing them with machines. The size of the commitment is the message. The outcomes are not the point.

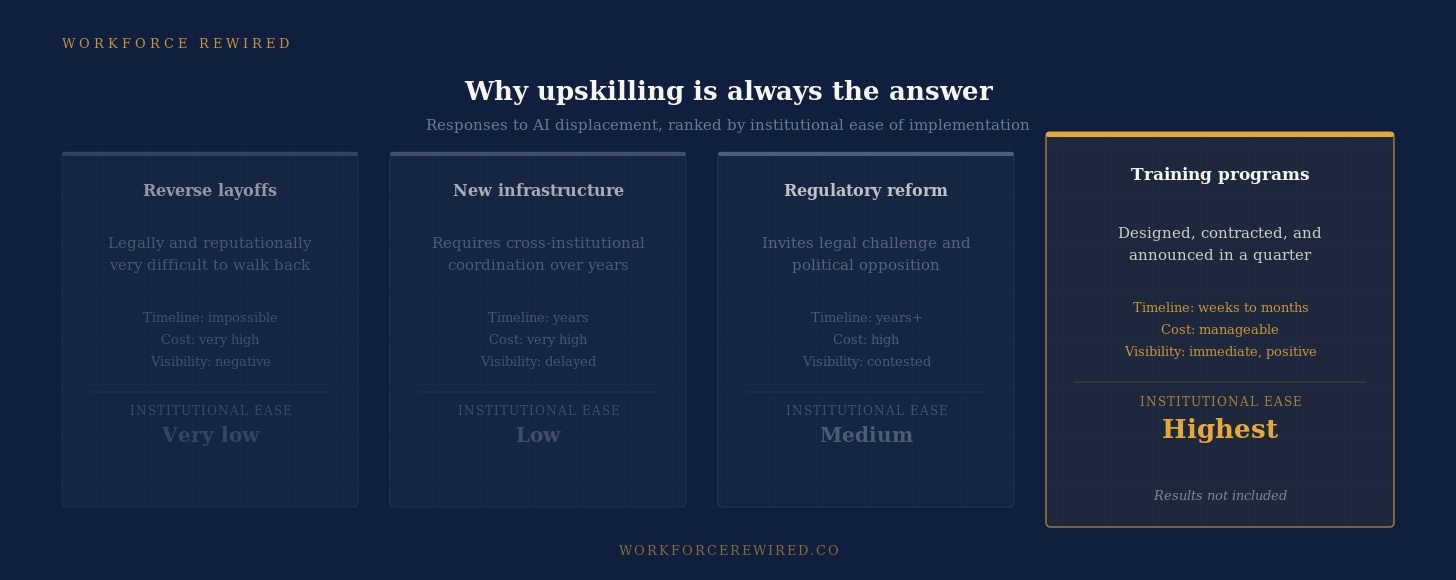

This is, in part, what makes upskilling so durable as a corporate and political response: it is the easiest available action. Layoff announcements are hard to walk back. Infrastructure takes years and requires coordination across institutions. Regulatory responses invite legal challenge. A training program can be designed, contracted, and announced in a quarter. It generates enrollment metrics quickly. It satisfies the demand for visible response without requiring anyone to wait for labor market evidence. In an environment where companies and governments face genuine pressure to show they are doing something about AI displacement, training programs offer a solution at exactly the right speed.

This creates a ready market for vendors who specialize in delivering large-scale, impressive-sounding programs that generate good numbers on the activity metrics that get reported in earnings calls and ESG disclosures. The vendors are not lying. The courses are real. The learners are real. The completion certificates are real. Whether the skills transfer to meaningful labor market mobility is the question that neither the employer nor the vendor needs to answer, because neither is being held accountable for it.

Government programs have a version of the same problem at the political level. A workforce development investment is a visible, cuttable ribbon. A funding commitment makes news when it is announced. Measuring whether workers are actually better off three years later requires methodology, data infrastructure, and political will to report the results even when they are disappointing. The infrastructure is weak and the will is intermittent.

The result is a roughly $400 billion market that is very good at producing the appearance of response to a genuine crisis and considerably less reliable at producing the response itself.

What (Might) Actually Work

Here is where I am going to resist the impulse to give a clean answer, because I do not think a clean answer is honest.

The evidence does suggest some things. Programs with employer co-design, where training curricula are built with and for specific hiring employers rather than for a generic labor market, consistently outperform generic ones. Registered apprenticeship models, which integrate learning into employment and pay people while they develop, have measurably better labor market outcomes than classroom equivalents. Pay-for-outcomes contracting, where vendors only get paid when workers secure and retain jobs above a certain wage floor, aligns incentives in ways that enrollment-based models cannot. Income-share agreements, when structured honestly and with income protections for students who do not succeed, at least force providers to care about what happens after the training ends.

But scaling any of these is hard, and the reasons they have not scaled are not primarily technical. They are economic and political. Employers resist the cost and commitment of co-designed apprenticeships. Vendors resist the risk of pay-for-outcomes contracting. Governments resist the complexity of accountability systems that require multi-year tracking and honest reporting of programs that do not perform.

The inconvenient conclusion that the evidence keeps pointing toward is that the skills people actually need in this economy develop through working, not through training for work. The training market has built an industry on the premise that you can replicate that development efficiently and at scale through coursework. The data, accumulated now across decades, suggests you largely cannot.

The roughly $400 billion is real. The problem it claims to be solving is real. The gap between where the solution should be and where it is should alarm anyone who believes that workforce transitions can be managed rather than just endured.

Whether the market that profits from the gap can be reformed to close it is a question worth sitting with longer than the next funding announcement cycle allows.

If this resonated, share it with someone who is making decisions about learning and workforce development spending. And if you are inside an organization trying to figure out how to do this better, I would genuinely like to hear from you: christina@workforcerewired.co