The Entry-Level Crisis: What Happens to Career Development When AI Does the Apprenticeship Work?

You can't skip the beginning and expect to get to the middle (or the top).

There is a question I started thinking about when AI first arrived on the scene, and I have not stopped thinking about it since. It is not about net job counts or automation percentages or wage premiums for AI-fluent workers. It is simpler and, I think, more consequential than any of those.

When AI absorbs the entry-level tasks that used to teach people how to work in an organization, where does the ladder go?

I am not asking this rhetorically. I am asking it because no one has a satisfying answer, and the absence of a satisfying answer is starting to show up in the data in ways that should alarm every senior leader, university administrator, and workforce policymaker paying attention.

The Disappearing Bottom Rung

Let’s start with what the numbers actually say.

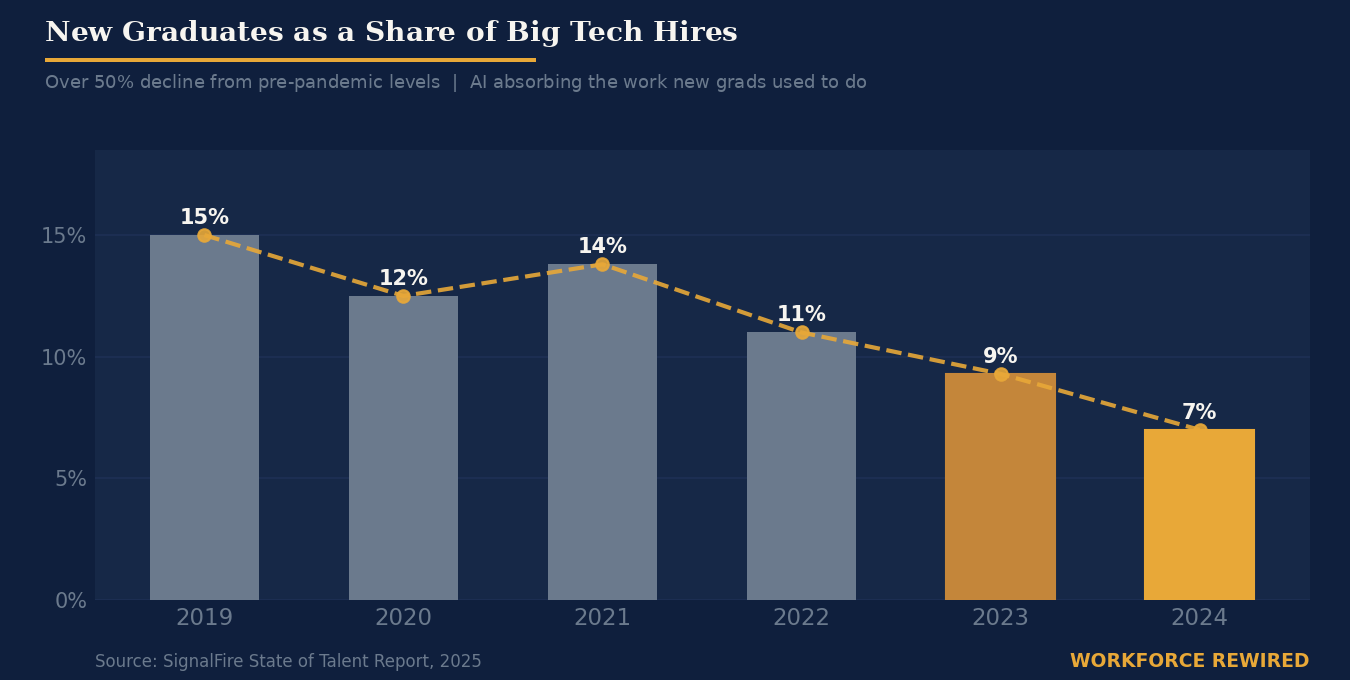

SignalFire’s 2025 State of Talent Report analyzed hiring patterns across major tech companies and found that new graduates now represent just 7% of hires at Big Tech firms, down from roughly 15% in 2019. That is more than a 50% decline in five years. At startups, the picture is similar: new grads made up 30% of hires in 2019, and today the figure sits under 6%.

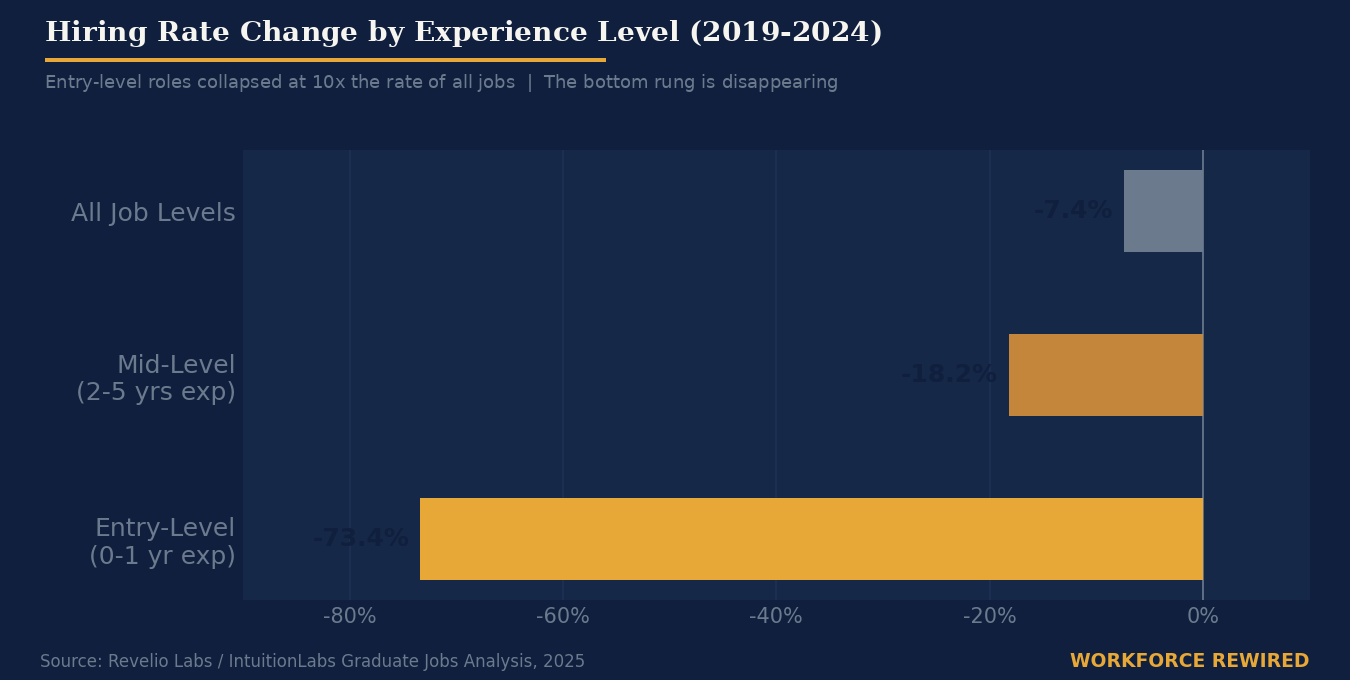

That alone would be striking. But the more telling data point comes from looking at what is happening at the level of specific job categories. Research compiled by IntuitionLabs found that entry-level positions, defined as roles requiring less than one year of experience, have seen hiring rates fall by 73.4% since 2019. All job levels combined? Down 7.4%. This indicates the entry-level contraction is not a reflection of an overall hiring slowdown. It is something specific happening to the bottom of the career ladder.

TechCrunch reported in May 2025 on research suggesting AI is already materially shrinking entry-level roles in tech, with SignalFire’s head of research describing “convincing evidence” that AI capabilities are a significant driver of the decline. And Anthropic CEO Dario Amodei said publicly that AI could wipe out half of all entry-level jobs within five years, a projection he acknowledged is not on most people’s radar.

By 2025, the downstream effects were landing on graduates themselves. CNBC reported in November 2025 that just 30% of the Class of 2025 secured full-time jobs in their fields, down from 41% for the Class of 2024. Companies are skipping new graduates and hiring mid-level talent instead, a trend Fortune documented in August 2025: Big Tech increased hiring of workers with two to five years of experience by 27% even as new grad hiring collapsed.

What Entry-Level Work Actually Was

Here is what I think gets missed when we talk about this as a labor market story.

Entry-level work was never really about the output. It was about the learning.

When a junior analyst spent three months building PowerPoint decks for a managing director, they were not doing it because the managing director could not have made the deck faster. They were doing it because, in the act of building that deck, they were learning which numbers the client actually cared about, how the firm framed problems, what the senior people worried about when no one was watching, and how decisions actually got made. The menial task was the delivery mechanism for something that had no other delivery mechanism: business context.

Editor’s note: as a recovering consultant, this is what strikes me most. I fully see the things I cut my own proverbial corporate teeth on disappearing with the advent of AI. The example above isn’t hypothetical. This was very much my reality ~20 years ago.

The same logic held everywhere. Junior developers who spent six months fixing bugs in a legacy codebase were not wasting time. They were learning the architecture, the technical debt, the history of decisions that produced that debt, and the names of the people who had made those decisions. Junior marketers who spent a year manually pulling campaign data were learning what metrics the business actually cared about, and why the dashboard numbers sometimes told a different story than the conversations in the hallway.

This is the apprenticeship model, and it is as old as knowledge work itself. You earn business context by doing work at the frontier of your capability while being adjacent to people who already have it. Context is contagious, but only through proximity and practice.

AI does not just do the task. It severs that transmission.

When an LLM pulls the campaign data, writes the first draft, builds the preliminary analysis, and summarizes the meeting notes, the human who reviews the output is consuming context, not building it. That is a fundamentally different cognitive experience. Review is not the same as construction. And a junior professional who spends their early years reviewing AI output rather than doing foundational work is not accumulating the same kind of understanding, even if their technical output is superficially equivalent.

If I had never sat in the meeting rooms taking the notes, done the data cleanup, or drafted the PowerPoint deck and gotten loops of feedback, I would never have honed a craft or learned business context. How is that replicated when the human is no longer needed in that same way?

The Pipeline Problem Nobody Is Fully Accounting For

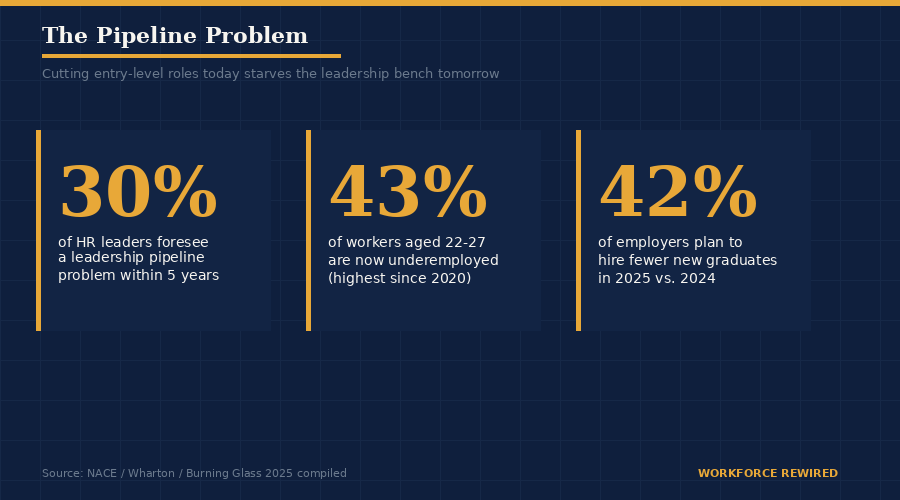

Wharton’s research on the vanishing talent pipeline put the organizational risk plainly: companies automating entry-level work today are starving their own talent pipelines for tomorrow. Thirty percent of HR leaders already foresee a leadership pipeline problem within five years. Fifteen percent anticipate increased costs from over-reliance on expensive experienced hires. Eleven percent foresee the loss of institutional knowledge transfer that used to happen naturally through the career ladder.

Meanwhile, the downstream consequences for young workers are showing up in economic statistics. Burning Glass Institute data shows that nearly 43% of workers aged 22 to 27 are now underemployed, the highest rate since 2020. These are not people who lack ambition or credentials. They are people who arrived at the entry point of the career ladder and found the bottom rung had been removed.

The social mobility implications of this shift deserve more attention than they are getting. The traditional entry-level role was one of the few reliable mechanisms for talented people from non-elite backgrounds to gain access to high-value professional environments. You did not need a network to get an analyst role at a consulting firm. You needed to be diligent and smart. The network came from doing the work alongside the people who had it. When the entry points close, that mechanism closes with them, and the resulting concentration of opportunity at the top of the pipeline is not a coincidence. It is an architectural outcome.

What Employers Are (and Are Not) Doing

The honest answer is that most companies have not thought this through.

The instinct driving entry-level job cuts is largely rational at the individual company level. If AI can handle the tasks a junior analyst used to do, the cost case for not automating is hard to make. The problem is that the rational move for each individual company, aggregated across the economy, produces an irrational collective outcome: a workforce pipeline that is not being replenished.

A small number of companies are experimenting with what might be called contra-hiring, deliberately maintaining entry-level programs even where the immediate task productivity case may be weaker, because they understand the long-term human capital argument. Take IBM, for example. They’ve committed to tripling their entry-level hiring this year, citing a goal to buffer their long-term leadership pipeline.

The research does suggest that a new model for entry-level work is beginning to emerge, one where the role is redesigned around augmentation rather than replacement. SSIR’s analysis of AI and entry-level jobs describes organizations that are restructuring junior roles to combine AI tool orchestration with mentorship and learning, building projects that require junior employees to work alongside AI systems rather than simply being displaced by them. The hypothesis is that the new core competency for early-career workers is not doing the foundational task but directing, validating, and contextualizing the output of AI doing it. That is a coherent framing. Whether it produces the same depth of business context formation as the old model remains genuinely unknown.

Universities: Trying to Respond at the Wrong Speed

The institutions most directly responsible for preparing people for this moment are operating on a timeline that is structurally mismatched with the speed of the problem.

Undergraduate AI degree programs in the U.S. grew 114% between 2024 and 2025, from 90 to 193 programs. That is real movement. But the curriculum revision cycles at most institutions run three to five years, and the pace of AI capability change is outrunning that by an order of magnitude. Universities are updating what they teach. The harder and largely unaddressed question is how the career formation model changes when the foundational work that used to happen at the first employer is no longer available.

The Association of American Colleges and Universities is running a structured eight-month program for faculty teams to redesign AI-integrated curricula, which is a serious institutional response. A handful of universities are experimenting with work-integrated learning models and apprenticeship-degree hybrids that try to create the business context formation in the academic environment itself. UC Santa Barbara’s experiential learning initiative is one example of the logic: if employers are no longer providing the apprenticeship, universities need to build something that approximates it. Whether structured classroom projects can replicate the kind of contextual learning that comes from being embedded in a real organization under real pressure is, again, an open question.

The most intellectually honest framing I have heard is this: universities are being asked to solve a problem that employers created, at a pace the academic model was not designed for, with accountability for outcomes they were never historically responsible for. That is not a reason to give up on curriculum redesign. It is a reason to be realistic about what universities can and cannot do alone.

The Question No One Can Answer Yet

I want to be honest about what we do not know, because this conversation is full of confident assertions that are not warranted by the evidence.

We do not know whether reviewing and directing AI output will, over time, build business context as well as doing foundational work directly does. It might. The skills required to effectively prompt, validate, and synthesize AI-generated analysis are real skills. The question is whether they produce the same depth of organizational understanding that comes from years of foundational practice, and we genuinely do not have longitudinal evidence yet because the timeline is too short.

We do not know whether redesigned entry-level roles at progressive companies will be adopted broadly enough to offset the systemic contraction in traditional entry points. The economics of automation are compelling at the individual firm level, and collective action problems do not resolve themselves.

We do not know whether the university adaptations underway are moving fast enough or are well-calibrated enough to serve as a substitute for the employer-side apprenticeship model. Experiential learning programs and work-integrated degrees are promising. Whether they produce leaders in twenty years with the same depth of contextual judgment as leaders who came up through traditional career ladders is unknowable right now.

What we do know is this: the career formation system that produced most of the senior leaders in today’s organizations was built on a scaffolding of foundational work that no longer exists at the scale it once did. We are, in real time, running an experiment on whether knowledge work can produce its own next generation without that scaffolding. The results will not come in for another decade or more.

That is a long time to wait to find out whether we built the architecture correctly.

What Should Actually Happen

I will end with where I think the institutional responsibilities actually lie, based on what I have seen in practice and what the research supports.

Organizations that are cutting entry-level roles need to recognize they are making a decision with a ten-year consequence, not a one-year cost optimization. The organizations that maintain deliberate entry-level programs, even where the immediate productivity case is weaker than automation, are building a long-term human capital advantage. That case needs to be made explicitly at the board and executive level, where the trade-off is often invisible because the pipeline consequence does not show up in any near-term metric.

Universities need to stop treating curriculum revision as their primary obligation and start treating career formation infrastructure as a co-equal one. Work-integrated learning is not a nice-to-have. It is the mechanism by which business context formation happens when the employer-side model is contracting. That requires deeper employer partnerships, longer time horizons, and accountability for outcomes that most institutions have never been asked to track.

Governments need to move from transparency to intervention. Knowing how many layoffs are AI-attributable is useful. Incentive structures that make it economically rational for companies to maintain entry-level pipelines, similar to the apprenticeship funding and incentive models operating in the UK and Germany, are the actual intervention. The U.S. does not have a coherent version of this yet.

And the workers who are navigating this right now, the 2024, 2025, and 2026 graduates entering a labor market that has contracted at the entry point, need honest guidance rather than reassuring abstractions. The skills that matter most in this environment are not the ones that AI is best at. They are judgment, contextual reasoning, relationship navigation, and the ability to work across ambiguity. Those skills take time and practice to develop. They require access to environments where that development can happen. They are core human skills.

The entry-level crisis is not really about job counts. It is about what we have always known about how people become good at complex work, and the institutional question of who is now responsible for making that happen.

We are early in the experiment. It would be a significant mistake to assume it resolves itself.

Next issue: The GCC Question: as companies build out global capability centers to house AI-adjacent work, what does the new geography of knowledge work actually look like, and who benefits?

If this resonated, share it with someone in your organization who needs to read it. And if you are working on this problem from inside a company, a university, or a policy organization, I would genuinely like to hear from you: christina@workforcerewired.co