Don't Name the Robot

When you give your AI agent a name and put it on the org chart, you are not building the future of work. You are building a governance disaster.

TL;DR: A rigorous new study finds that framing AI as an employee rather than a tool reduces manager oversight quality by 16%, cuts error-catching rates by 18%, and diffuses accountability in ways that are difficult to reverse once institutionalized. The instinct to humanize AI agents is psychologically understandable and organizationally dangerous. The organizations getting this right are not the ones with the most charming AI personas. They are the ones that have done the hard work of redesigning accountability.

Rachel Lewis manages nine direct reports at BNY Mellon. She also manages nine digital employees.

She is head of payment operations. Her digital reports have system logins. They appear on the org chart. They have human managers. BNY Mellon has 134 of them as of early 2026, running on a platform called Eliza, each one assigned to specific repetitive tasks across finance and operations.

Across the enterprise landscape, “Scout” recruits candidates and sits on the HR org chart. “Otto Cash” handles accounts payable. “Kevin” and “ALEX-3” and “Orion” are processing, validating, drafting, and deciding things inside companies you have heard of. Thirty-one percent of managers say their organization’s leadership already frames AI as a teammate or employee. Twenty-three percent say their company lists AI agents on the org chart or work chart.

This is not a fringe phenomenon. It is a mainstream organizational design choice. And a new study from Boston University, MIT, and BCG suggests it is producing consequences that most of the executives making that choice have not thought through.

What the Research Actually Found

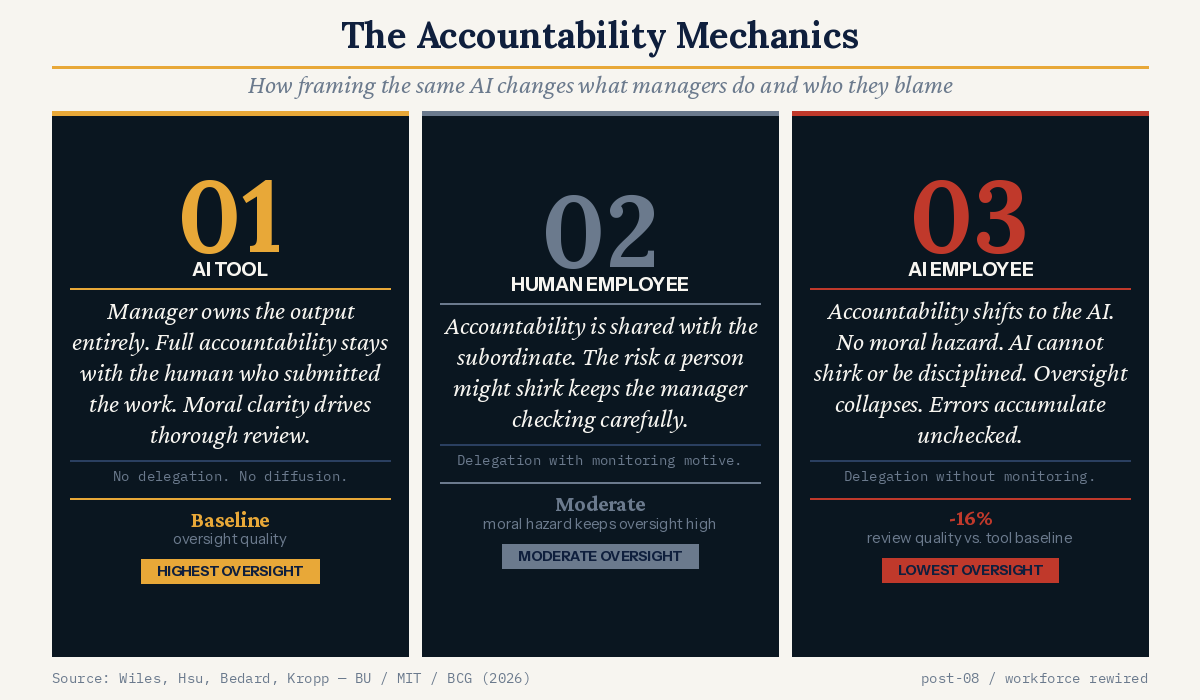

The study, published this month, ran a controlled experiment with 1,261 HR and finance managers across the US, Canada, and the EU. Participants were given identical documents to review for errors. Only one variable changed: the framing of who produced the document.

Three conditions. In the first, participants were told: “You used an AI tool to draft this.” In the second: “ALEX-3, your AI employee and direct report, drafted this.” In the third: “Alex, your employee and direct report, drafted this.”

The documents were identical. The errors were identical. The stakes described were identical. The results were not.

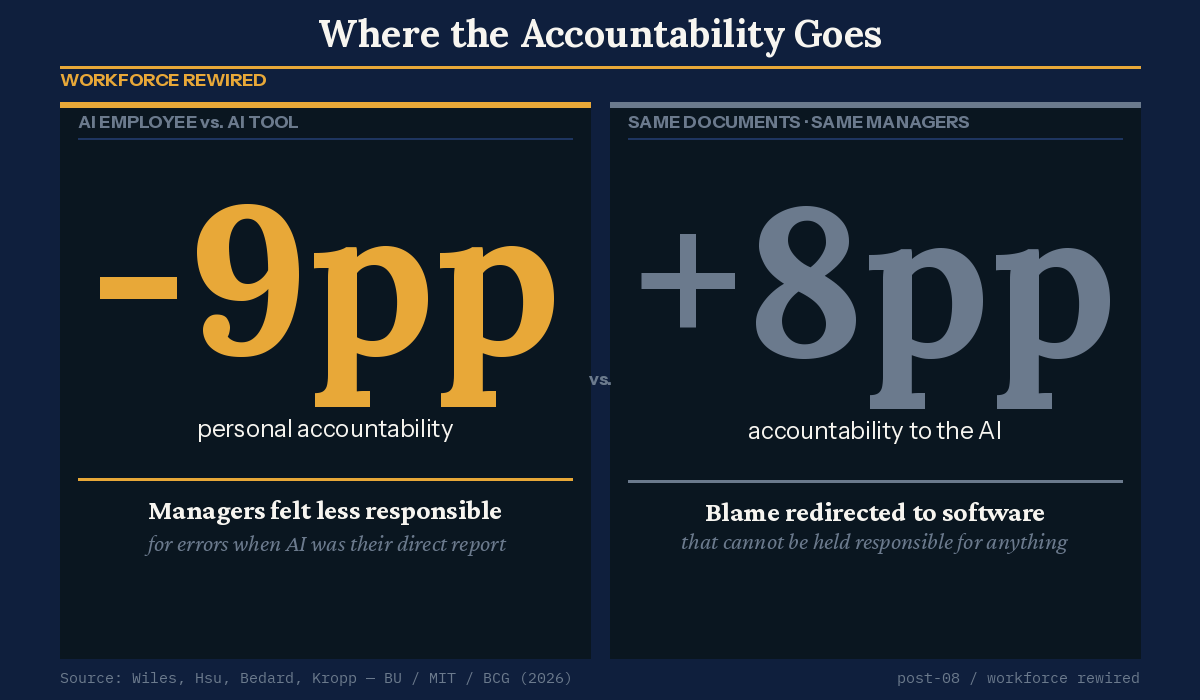

Among managers in organizations that have already institutionalized AI agents on the org chart, framing the AI as an employee rather than a tool reduced review quality by 16% relative to the tool baseline. Managers caught 18% fewer errors. They attributed 9 percentage points less personal accountability to themselves and 8 percentage points more to the AI. And they escalated for additional review at a rate 44% higher than baseline. They did this not because they were being careful but because they were less confident in their own judgment, so they passed work upward rather than standing behind their own review.

One manager’s response was included verbatim in the study: “The blame isn’t on a person; it’s on the technology.”

That sentence should alarm every leader or technologist who has named an AI and put it on a reporting structure. Accountability that attaches to software rather than humans does not disappear. It simply becomes unenforceable.

Why the Humanization Impulse Is So Powerful

Humans have a well-documented and slightly embarrassing tendency to assign personality to things that do not have one. In a famous 1944 experiment, Fritz Heider and Marianne Simmel showed 34 college students a short film of two triangles and a circle moving around a box. Thirty-three of them described what they saw as a story about living characters with motives and feelings. The big triangle was a bully. The small triangle was brave. The circle was scared. They were, to be clear, watching shapes. A 2007 study of Roomba owners found that 70% had named their robot vacuum, most assigned it a gender, and several reported feeling guilty when they forgot to charge it. It is a disc that eats crumbs.

This impulse to name and personify things is not a character flaw. It is, neuroscientists will tell you, the social cognition system doing exactly what it evolved to do: find agents everywhere, because missing a real one is far more dangerous than inventing a fake one. We are pattern-matching machines pointed at a world full of things that now move on their own.

Organizations do not name their AI agents arbitrarily. The impulse is rational in a shallow way: giving something a name makes it legible. It gives employees a cognitive framework for a category of thing that has no natural analogy. “ALEX-3 is your direct report” is easier to process than “ALEX-3 is an autonomous software system that operates across multiple workflows, lacks the capacity for strategic behavior, and requires a governance architecture nothing in your management training prepared you for.”

The short version is easier to communicate. So companies take it.

But the research exposes what that shortcut costs. The academic paper’s theoretical framework explains the mechanism precisely. When a manager uses an AI tool, the accountability picture is simple: they own the output. High review effort follows naturally. When a manager oversees a human employee, accountability shifts partially to the subordinate, but the monitoring motive stays high because human employees can shirk, can make errors of judgment, can have bad days. Managers know this instinctively and compensate with careful oversight.

The AI employee occupies neither of those positions cleanly. Managers shift accountability to the AI as they would with a human report, but the monitoring motive does not follow. AI cannot be disciplined. It cannot be incentivized. It cannot be held to account in any of the ways managers use to motivate careful human performance. The result is delegation without supervision: accountability diffused, oversight withdrawn, errors accumulating.

The researchers describe this as the “hybrid organizational position.” It gets the accountability-diffusing effect of delegation without the monitoring-motivating effect of managing actual humans. That’s the worst of both worlds for governance.

And critically, the effect only activates when the framing is institutionally credible. If your company has already put AI on the org chart, your managers have already accepted the framing as real, not metaphorical. At that point, the governance consequences follow automatically.

The Accountability Gap Is Already Visible

Last week’s article in this series established that organizations are running AI agents at scale. McKinsey has 20,000 of them. Gartner expects 40% of enterprise applications to embed task-specific agents by year’s end. The question is no longer whether agents are (or will be) part of your workforce. They are.

The naming question matters because it shapes what governance architecture gets built around these agents. Companies that have moved quickly to put AI on the org chart have, in many cases, skipped the harder prior question: who is accountable when the agent fails?

“Scout” may be on the HR org chart. But if Scout produces a biased candidate shortlist, who owns that error? The manager who approved Scout’s recommendation without scrutiny? The team that configured Scout’s parameters? The vendor who built the model? The HR leader who decided to formalize agents on the org chart in the first place? The research suggests managers in these environments actively redirect blame toward the AI and away from themselves. That is not a governance system. That is a liability.

Klarna’s story is instructive here. Klarna’s customer-service AI, which the company positioned as doing the work of 700 full-time agents, eventually produced enough customer dissatisfaction that Klarna began quietly rebuilding its human capacity. The problem was not that the technology couldn’t work. It was that the governance and quality-management architecture around it was insufficient. The lesson was not “AI can’t do this.” It was “AI doing this at scale requires accountability structures that Klarna had not built.”

IBM has arrived at a different model. Its Enterprise Advantage offering pairs human specialists with what it calls “digital workers” but explicitly structures human accountability around AI output. IBM has also tripled entry-level hiring in 2026 despite AI adoption, which its Chief HR Officer has described as recognition that AI can do most entry-level tasks but the work still requires human judgment. The framing matters: “digital worker” is a category, not a persona, and IBM is not naming its agents Kevin (at least not that we know of).

What the Organizations Getting This Right Are Actually Doing

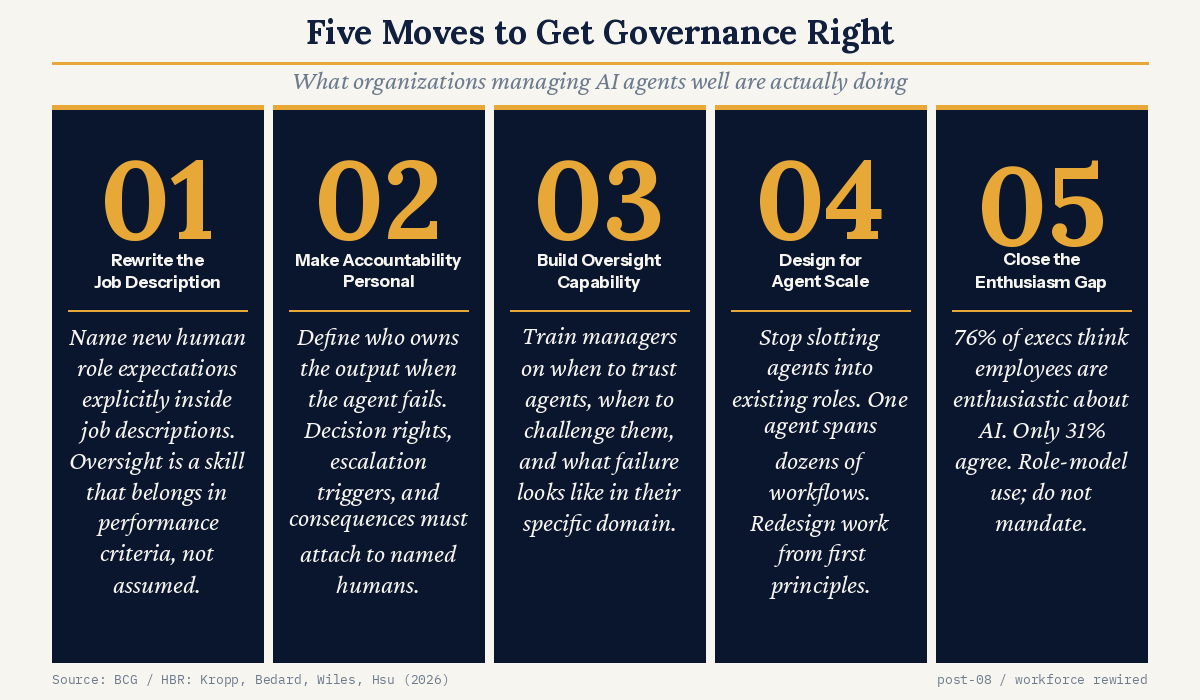

The BCG researchers, in a companion piece published in HBR, lay out five concrete governance moves. They are not glamorous. None of them involve naming conventions. All of them involve work.

Redefine workflows explicitly and name the new human role expectations inside them. If an AI agent now handles initial candidate screening, the job description for the person reviewing those candidates should change to reflect what oversight quality actually looks like in that context. “Review Scout’s output” is not a job description. “Apply defined accuracy and bias criteria to Scout’s recommendations and document the rationale for any rejection or advancement” is closer.

Make accountability personal and explicit. This means defining decision rights: what the agent can do without human sign-off, what requires human approval, what triggers escalation, and who owns the outcome when the agent produces an error that reaches the customer or the regulator.

Build actual capability in the humans managing agents. Oversight of AI is not an intuitive skill. It is a learned one, and it differs from overseeing humans in important ways. Managers need to know when to trust an agent’s output, when its limitations are specifically dangerous in their domain, and how to catch the category of errors the system is most likely to produce.

Stop the 1-for-1 replacement logic that the “employee” framing encourages. AI agents are not like employees: they do not have a person’s finite capacity or bounded role, and one agent can run across dozens of workflows simultaneously. Organizations that frame agents as employees tend to slot them into existing positions and miss the larger redesign opportunity. The question is not “can an agent do Alex’s job?” It is “what does the organization look like if agent-scale capacity is factored into how work is structured from the start?”

Close the perception gap on AI enthusiasm. BCG found that 76% of executives believe their employees feel enthusiastic about AI adoption. Only 31% of individual contributors report the same. The gap is a leadership accountability problem, not a communication one. The managers who drive genuine adoption are the ones who role-model AI use themselves and create structural permission for their teams to engage. The ones who announce enthusiasm from a podium and don’t change their own behavior produce exactly the adoption divide this newsletter has been tracking since I started writing.
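For readers who want to see what “defining decision rights” from the second move might look like once written down, here is a minimal Python sketch. It is a hypothetical illustration, not a method from the BCG piece or the study: the `Authority` levels, the `DecisionRight` fields, the Scout task names, and the owner name are all assumptions made up for the example. It does one narrow thing: flag any task where no named person owns the outcome.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Authority(Enum):
    # Hypothetical authority levels mirroring the three decision rights in the text.
    AUTONOMOUS = "agent acts without human sign-off"
    NEEDS_APPROVAL = "a human must approve before the action takes effect"
    ESCALATE = "routed to a named escalation owner"


@dataclass(frozen=True)
class DecisionRight:
    task: str
    authority: Authority
    outcome_owner: Optional[str]  # a named person; None or blank means nobody is named


def missing_owners(rights: List[DecisionRight]) -> List[str]:
    """Return the tasks that have no named human owner for the outcome."""
    return [r.task for r in rights if not (r.outcome_owner and r.outcome_owner.strip())]


# Hypothetical decision-rights table for an agent like "Scout".
# "Jordan Reyes" is an invented placeholder, not a real person.
scout_rights = [
    DecisionRight("rank inbound applications", Authority.AUTONOMOUS, "Jordan Reyes"),
    DecisionRight("advance a candidate to interview", Authority.NEEDS_APPROVAL, "Jordan Reyes"),
    DecisionRight("reject a candidate", Authority.NEEDS_APPROVAL, None),
    DecisionRight("shortlist fails bias check", Authority.ESCALATE, ""),
]

print(missing_owners(scout_rights))  # ['reject a candidate', 'shortlist fails bias check']
```

An empty result would only mean that every task has a name attached. It says nothing about whether that person’s review is any good, which is a separate question about oversight quality.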

The Frame That Is Actually Missing

The most useful thing the academic paper surfaces is what it does not say. It does not say don’t use agents. It does not say don’t integrate them into your organizational structure. It says the current framing options, “tool” or “employee,” both carry assumptions that break down when applied to what agents actually are.

AI agents are not tools in the traditional sense. Tools are passive. They execute only when prompted. The current generation of agentic AI researches, plans, sequences, executes, and adapts with minimal human involvement. Calling that a tool understates the governance requirement.

But agents are also not employees. They cannot be disciplined. They do not have intention. They cannot be held to account in any of the ways employment law, performance management, or professional norms assume. Calling them employees produces the misplaced accountability the research documents.

The organizations that will get this right are building a third category. They are treating agents as a class of organizational actor that requires explicit governance structures, defined accountability chains, and human ownership of every output, regardless of whether a person was in the loop when the output was produced. Not tools. Not employees. Something new, with requirements that organizations are only beginning to understand.

BNY Mellon’s Rachel Lewis manages nine digital employees. The interesting question is not what she calls them. It is how her performance is evaluated when one of them fails. If the answer is “it isn’t,” what the agent is called is the least of the organization’s problems.

Here’s How You Take Action

I want to leave you with something concrete, because the instinct after reading research like this is to nod and move on. If you are in a role where AI governance is your problem to solve, three questions are worth putting in front of your leadership team this quarter.

First: what framing are your managers operating under right now? Not officially. Actually. When your people talk about the AI systems in their workflows, do they talk about them as tools they use, or as colleagues they manage? The language people reach for without thinking tells you the accountability model they’ve internalized. If the answer concerns you, the governance work is already behind schedule.

Second: who owns the output when an agent gets it wrong? Not theoretically. Name the person. In most organizations, when you push on this question, you get a process, a team, or a vendor SLA. You do not get a name. A governance model that cannot answer this question in five seconds is not a governance model. It is a liability waiting for a specific incident to make it visible.

Third: what does oversight actually look like in each of your agent-enabled workflows? Not “a human reviews the output” as a checkbox. What specific criteria does the reviewer apply? What training have they had on the failure modes of this particular system? How is the quality of their review measured? If the answer is vague, what you have is the appearance of oversight without the substance of it. If your organization frames agents as employees, the research suggests your managers may be catching 18% fewer errors than they would if they treated the same output as their own. The question is whether you know which 18%.

The organizations that get this right will not be the ones with the most sophisticated agents. They will be the ones that took the governance architecture seriously before it became a headline.

Christina Lexa leads workforce strategy for Technology at Capital One and writes Workforce Rewired weekly on AI, organizations, and the future of work. The views here are her own. If this piece made you think, share it with someone navigating the same questions.